Design and Evaluation of Visual Feedback for Virtual Grasp

Mores Prachyabrued, Faculty of ICT, Mahidol University

Christoph W. Borst, Center for Advanced Computer Studies, University of Louisiana at Lafayette

Abstract

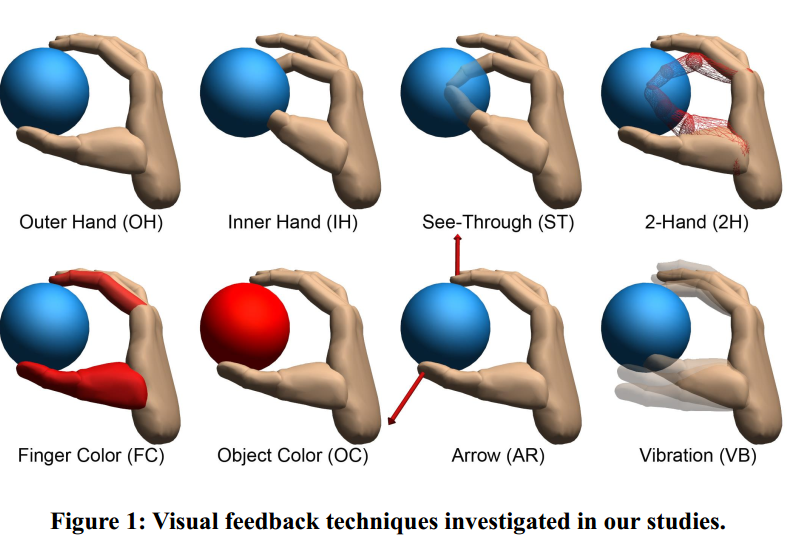

We tuned and evaluated visual feedback techniques for virtual grasps. Prior work on such feedback has been largely ad hoc, without substantial guidelines for technique selection. The techniques we evaluated included both standard and novel aspects (Figure 1).


Interpenetration Problem

Penetration of the user's real hand into virtual objects contributes to artifacts such as an object that sticks to the hand, so that exaggerated finger motions are required for release. These artifacts degrade performance and subjective experience. Users may reduce such problems by grasping with a light touch, and visual cues may help a user understand and control this light touch.

Experiment Overview

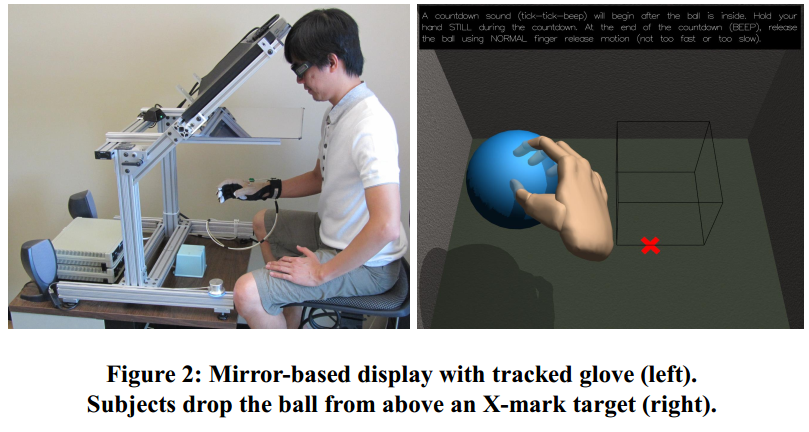

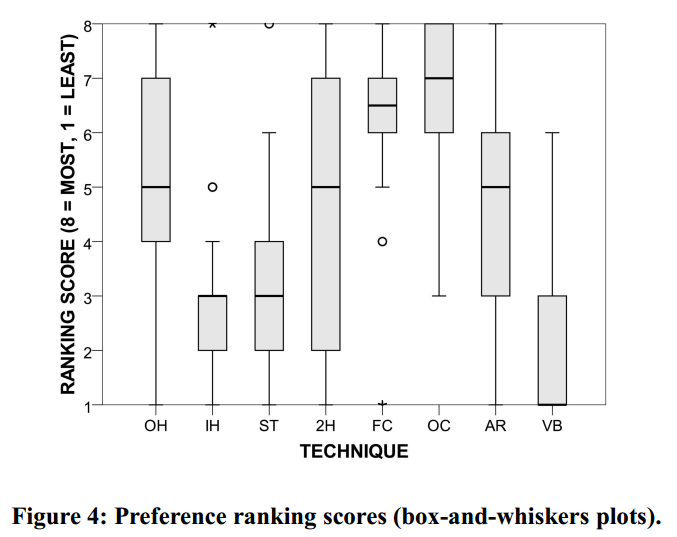

Pilot study: 5 subjects tuned the cue parameters of each technique (e.g., transparency level, rate of color change) with the goal to “encourage light touch”.

Main study: 30 subjects repeatedly picked up and dropped a ball in the environment shown in Figure 2. A performance study measured finger penetration, release time, and error. Subjects later ranked the techniques.
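To illustrate what a tunable cue parameter such as "rate of color change" could look like, the following is a minimal sketch and not the implementation used in the study. It assumes a linear mapping from finger penetration depth to a blend between a base hand color and an alert color. The class name `ColorCue`, the default rate, the RGB values, and the use of meters as the depth unit are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass
class ColorCue:
    """Hypothetical color-change cue driven by finger penetration depth."""

    # Assumed tunable parameter: blend amount gained per meter of penetration.
    rate: float = 50.0
    # Assumed colors (RGB in [0, 1]); not taken from the poster.
    base_rgb: tuple = (0.9, 0.7, 0.6)
    alert_rgb: tuple = (1.0, 0.0, 0.0)

    def blend_factor(self, penetration_m: float) -> float:
        """Map penetration depth (meters) to a blend factor clamped to [0, 1]."""
        return max(0.0, min(1.0, self.rate * penetration_m))

    def color(self, penetration_m: float) -> tuple:
        """Linearly interpolate from the base color toward the alert color."""
        t = self.blend_factor(penetration_m)
        return tuple((1.0 - t) * b + t * a
                     for b, a in zip(self.base_rgb, self.alert_rgb))


if __name__ == "__main__":
    cue = ColorCue(rate=50.0)
    for depth in (0.0, 0.005, 0.01, 0.03):
        print(depth, cue.color(depth))
```

Under these assumptions, a larger `rate` makes the color saturate at a shallower penetration depth, which is the kind of setting a subject could adjust when tuning a cue to encourage light touch.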


Main Results

Performance: The best-performing techniques were IH, ST, and 2H (Figure 3). All three directly reveal the real hand configuration rather than using indirect representations of penetration.


Implications: An Updated Interaction Guideline

Standard 3D interaction guideline: Avoid penetrating visuals.

New guideline: Provide interpenetration cues. Moreover, for grasping:

• Subjectively, cues augmenting constrained visuals are promising.
• In terms of performance, direct rendering of interpenetration can be better.
• Reasonable tradeoffs can be found.